Earlier this year (February 2021), Oracle announced full L2 support on OCI (you can read more here). But what does that mean? Why is it really a big deal?

Obviously, Oracle's major competitors are not really interested in talking about L2 support in the cloud. But when you read a post by Ivan Pepelnjak about it, and about the challenges Oracle is facing (where many others have already failed), you get an idea of how big this is (you can read it here).

Being able to virtualize L2 capabilities means that you don't need to rewrite or re-architect your applications to move them to the cloud, because the same L2 functionality that you have on-premises will be available in the cloud.

Before this announcement, all major cloud providers focused their efforts on cloud-native applications, which is great for those applications. But what happens with all the non-cloud-native applications? That is the key point here: some of them require re-engineering to be able to run in the cloud, and that is what makes OCI L2 support something "BIG".

To understand the limits of most L3 network virtualization implemented in the cloud today, we need to take a deep dive into this technology. (Be aware that L3 virtualization implementations can differ from one provider to another, and that some simplifications are made here to show, in an "easy way", how the technology works.) So, let's start from the beginning ...

L3 virtualization on OCI:

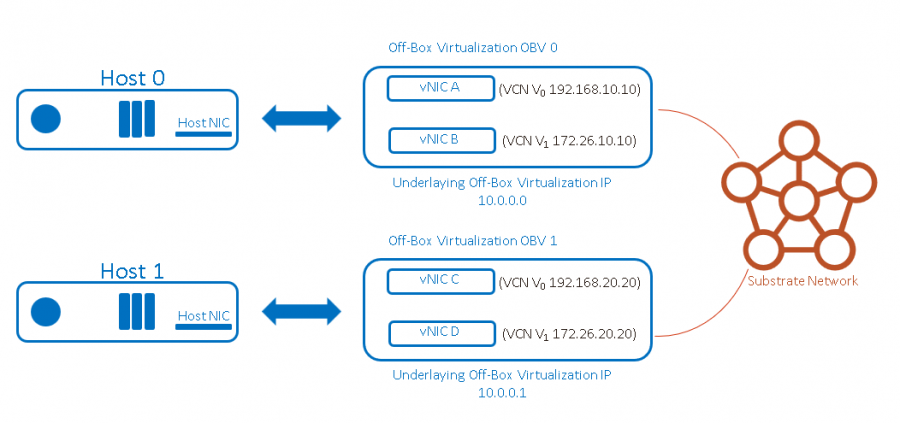

In OCI, all compute servers are connected to multiple "off-box virtualization devices", where the "magic" of network virtualization happens. Those virtualization devices are, obviously, connected to the physical network and have an IP to interact with the OCI management infrastructure. In the following post, which is the source for most of the technical content shown here (I really recommend reading it), this underlying network is called the "substrate".

https://blogs.oracle.com/cloud-infrastructure/first-principles-l2-network-virtualization-for-lift-and-shift

When a customer launches an instance, that instance has at least one virtual interface. The OCI control plane for compute and networking is able to track its location and assigns a unique interface identifier to every virtual interface located on the same physical host.

At this point, OCI knows on which physical host each of the virtual interfaces attached to the different customer instances is located.

To use an IP belonging to the customer's VCN (Virtual Cloud Network), a call to an API is made, so the virtual networking control plane can assign an IP to the vNIC and also be aware of this allocation.

At this point, the control plane knows which vNIC is assigned to a specific customer IP, and where (on which physical server) the mapping between the vNIC and the physical NIC is located.

"The control plane pushes this information to the virtualization devices, and the data-path uses it to send and receive traffic on the virtual network. When a packet is sent from a virtual interface, the data-path looks up the virtual cloud network (VCN) associated with the sending interface. Then it retrieves a map between IP addresses in the VCN and the interface to which they’re assigned. Finally, it uses the destination virtual interface to determine how to encapsulate and send the packet.

When we encapsulate the packet, we wrap the original packet in a new packet header for sending on our substrate network. This packet header includes the physical network IP addresses and OCI-specific metadata, including the sending and receiving virtual interface IDs."
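The lookup described in the quote can be sketched roughly as follows. This is only an illustration of the idea, not OCI's real data-path: the table names, the identifiers and every address are made up for the example.

```python
# Hypothetical tables pushed by the control plane (all values are invented).
# vNIC id -> (VCN it belongs to, substrate IP of the device hosting it)
VNICS = {
    "vnic-a": ("vcn-1", "192.168.10.5"),
    "vnic-b": ("vcn-1", "192.168.20.9"),
}

# VCN -> {customer IP -> vNIC id that owns it}
VCN_IP_MAP = {
    "vcn-1": {"10.0.0.2": "vnic-a", "10.0.1.7": "vnic-b"},
}


def encapsulate(src_vnic, dst_ip, payload):
    """Wrap a customer packet in an outer header for the substrate network."""
    vcn, src_substrate_ip = VNICS[src_vnic]        # 1. VCN of the sender
    dst_vnic = VCN_IP_MAP[vcn].get(dst_ip)         # 2. IP -> vNIC map
    if dst_vnic is None:
        return None  # the control plane never heard of this IP
    _, dst_substrate_ip = VNICS[dst_vnic]          # 3. where that vNIC lives
    return {
        "outer_src": src_substrate_ip,
        "outer_dst": dst_substrate_ip,
        "src_vnic": src_vnic,   # metadata carried in the outer header
        "dst_vnic": dst_vnic,
        "inner": payload,       # original packet, untouched
    }


packet = encapsulate("vnic-a", "10.0.1.7", b"hello")
print(packet["outer_dst"], packet["dst_vnic"])
```

Notice that no MAC address appears anywhere in the lookup: the destination is resolved only from the destination IP and the sender's VCN.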

Two interesting things to note:

- ".. the destination of the packet in the physical network is based entirely on the destination IP, the MAC is effectively immaterial."

- ".. the sender has to know what vNIC the destination IP is mapped to and where it exists in the physical network."

As you can see, OCI doesn't need the MAC address to deliver packets to their destinations. So what about ARP? How does it work in a virtual environment like OCI?

ARP is used in IPv4 when source and destination are in the same broadcast domain, to map an IP address to its MAC address so the packet can be delivered. ARP is an L2 protocol.

Remember that the OCI control plane knows in advance the IPs associated with all vNICs, so the virtualization stack can answer ARP requests immediately, without the request even leaving the host.

OCI also does something really clever to flatten the network. Each time a VM wants to send traffic to a destination in a different subnet, the VM sends an ARP request for the IP of the default gateway. The OCI control plane answers with a pre-canned ARP reply, so that the packet is sent directly to the destination vNIC. The default gateway is an illusion. In fact it doesn't exist, because it is different each time and is always the destination vNIC.
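A toy version of this "ARP answered from a table" behaviour could look like the sketch below. The addresses, the pre-canned MAC and the function name are assumptions made for the example, and returning nothing for an unregistered IP is a simplification of this sketch, not a statement about how OCI behaves.

```python
# All addresses below are invented illustration values.
GATEWAY_IP = "10.0.0.1"
PRECANNED_GATEWAY_MAC = "00:00:5e:00:53:01"  # hypothetical pre-canned answer

# IP -> MAC of the vNICs that the control plane already knows about
KNOWN_VNICS = {
    "10.0.0.2": "02:00:00:00:00:02",
    "10.0.0.3": "02:00:00:00:00:03",
}


def answer_arp(target_ip):
    """Answer an ARP request locally, without flooding any broadcast domain."""
    if target_ip == GATEWAY_IP:
        # The "gateway" is never a real hop: any fixed MAC works, because
        # delivery is decided later from the destination IP alone.
        return PRECANNED_GATEWAY_MAC
    # Same-subnet neighbour: only answerable if it was registered via the API.
    return KNOWN_VNICS.get(target_ip)


print(answer_arp("10.0.0.1"))   # pre-canned gateway answer
print(answer_arp("10.0.0.3"))   # known neighbour
print(answer_arp("10.0.0.99"))  # never registered: nothing to answer with
```

The last call hints at the limitation discussed next: an IP that was never announced through the API is invisible to this kind of responder.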

In this way OCI eliminates one hop, which is why there are no more than 2 hops between two VMs inside an Availability Domain. The flatter the network, the fewer hops you need and the better your latency.

Most applications work without problems in this type of scenario, but others (for example, many clustering solutions) need to use floating IPs, etc., and move IPs without notifying the control plane through the APIs. If you have one of those applications and want to move it to the cloud, you have two options:

- Re-engineer the application

- Use OCI

Why do some applications need L2 support to work?

Many applications were written for physical networks and need to share a broadcast domain to work. However, many L2 features are not supported in an L3 virtual network, because usually the cloud provider's control plane APIs must be called to assign IPs to vNICs.

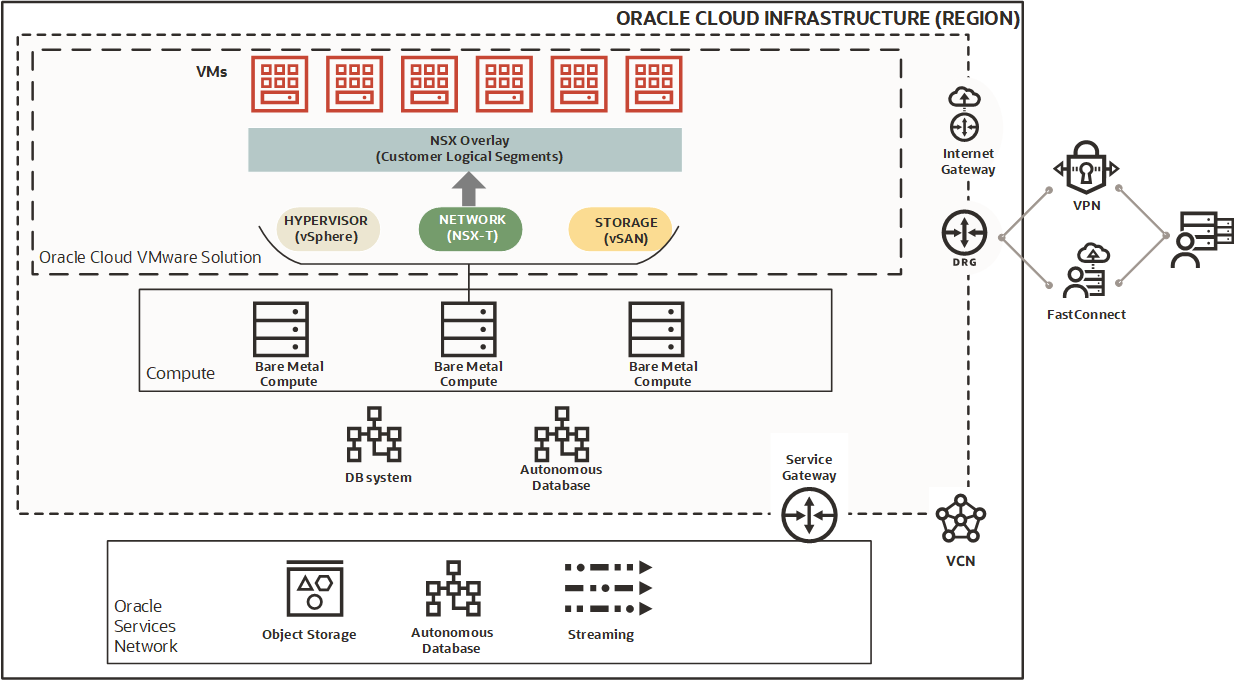

If you have a workload that assigns IPs without calling the cloud provider's APIs, for example VMware, or a cluster with floating IPs that can move without notifying the control plane, you need L2 support.
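To make the problem concrete, here is a small simulation of a floating IP failover on a purely L3 virtual network. The class, the node names and the IP are hypothetical; the only point is that traffic follows the control plane's table, not what the cluster nodes believe.

```python
class ControlPlane:
    """Toy control plane: the only source of truth for IP -> vNIC delivery."""

    def __init__(self):
        self.ip_to_vnic = {}

    def assign_ip(self, ip, vnic):
        # Stands in for the cloud provider API call
        self.ip_to_vnic[ip] = vnic

    def deliver_to(self, ip):
        return self.ip_to_vnic.get(ip)


def simulate_failover(call_api):
    """Return the vNIC that receives traffic for the floating IP after failover."""
    cp = ControlPlane()
    floating_ip = "10.0.0.50"
    cp.assign_ip(floating_ip, "vnic-node-a")  # node A is active at first

    # Node A fails. Node B configures the IP locally on its own interface,
    # which the control plane cannot see unless the API is called.
    if call_api:
        cp.assign_ip(floating_ip, "vnic-node-b")

    return cp.deliver_to(floating_ip)


print(simulate_failover(call_api=False))  # vnic-node-a: traffic goes to the dead node
print(simulate_failover(call_api=True))   # vnic-node-b
```

An application written for a physical network takes the first path: it announces the move on the wire and never calls any API, so on an L3-only virtual network the mapping stays stale.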

Situations in which you need L2:

- If you want to assign MACs and IPs without using an API call: not only VMware and other hypervisors, but also most network appliances such as firewalls, load balancers, etc. need to do this.

- Low-latency reassignment of MACs and IPs for high availability and live migration: when a cluster wants to move an IP from one node to another, or in the event of a node failure, applications need to reassign IPs and MACs. Typically the new active node sends a gratuitous ARP (GARP) to reassign a service IP to its MAC, or a Reverse ARP (RARP) to reassign a service MAC to its interface.

When we use vMotion (or an equivalent) to live-migrate a VM from one host to another, the new host will (and must) send a RARP once the guest VM has migrated.

- Interface multiplexing by MAC address: hypervisors hosting multiple VMs on a single host need to deal with multiple IPs on the same interface and be able to differentiate the VMs by their MAC.

- VLAN support: some applications need (or maybe you want) to separate traffic into different VLANs.

- Multicast & broadcast traffic: ARP requires L2, but it is not the only protocol that does. Others also rely on multicast or broadcast traffic.

- Support for non-IP traffic: in a typical cloud you need L3 to communicate between VMs and hosts. If L2 and VLANs are supported, you can of course use IPv4/IPv6, but also IPX or any other protocol.
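As an illustration of the GARP mentioned in the high-availability case above, this sketch builds the raw bytes of a gratuitous ARP request using only the standard library. The MAC and IP are invented, the function name is hypothetical, and actually sending the frame (which needs a raw socket and privileges) is left out on purpose.

```python
import socket
import struct


def build_garp(mac, ip):
    """Build an Ethernet frame carrying a gratuitous ARP request."""
    mac_bytes = bytes.fromhex(mac.replace(":", ""))
    ip_bytes = socket.inet_aton(ip)

    # Ethernet header: broadcast destination, our MAC, EtherType 0x0806 (ARP)
    ethernet = b"\xff" * 6 + mac_bytes + struct.pack("!H", 0x0806)

    # ARP header: Ethernet (1), IPv4 (0x0800), hlen 6, plen 4, opcode 1 (request)
    arp = struct.pack("!HHBBH", 1, 0x0800, 6, 4, 1)
    arp += mac_bytes + ip_bytes   # sender MAC and sender IP
    arp += b"\x00" * 6 + ip_bytes # target MAC unset, target IP == sender IP

    return ethernet + arp


frame = build_garp("02:00:00:00:00:0b", "10.0.0.50")
print(len(frame), frame.hex())
```

What makes the ARP "gratuitous" is that sender IP and target IP are the same: the node is not asking anything, it is telling everyone in the broadcast domain that the service IP now lives behind its MAC. That only works if there is a broadcast domain to tell, which is exactly what L2 support provides.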